Wait… this is exactly the problem a video codec solves. Scoot and give me some sample data!

I was not talking about classification. What I was talking about was a simple probe of how a collage of similar images compares in compressed size to the same images compressed individually. The hypothesis is that a compression codec would compress images with similar color distributions better in a spritesheet than if it encoded each image individually. I don’t know, the savings might be negligible, but I’d assume there is something to gain, at least for some compression codecs. I doubt doing deduplication post compression has much to gain.

I think you’re overthinking the classification task. These images are very similar and I think comparing the color distribution would be adequate. It would of course be interesting to compare the different methods :)

The first thing I would do when writing such a paper would be to test current compression algorithms by creating a collage of the similar images and seeing how its size compares to the combined size of the individual images.
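A minimal sketch of that comparison, under loud assumptions: it uses zlib from the Python standard library as a stand-in for a real image codec, and synthetic byte blobs as a stand-in for decoded pixel data. The helper names (`make_similar_blobs`, `individual_size`, `combined_size`) are made up for illustration. It only shows the shape of the experiment, not what PNG, WebP or a video codec would give on real photos.

```python
import random
import zlib


def make_similar_blobs(count=4, size=8192, seed=0):
    """Synthetic stand-in for similar images: one random base, ~1% of bytes changed per copy."""
    rng = random.Random(seed)
    base = bytearray(rng.randrange(256) for _ in range(size))
    blobs = []
    for _ in range(count):
        variant = bytearray(base)
        for _ in range(size // 100):
            variant[rng.randrange(size)] = rng.randrange(256)
        blobs.append(bytes(variant))
    return blobs


def individual_size(blobs, level=9):
    """Total size when every blob is compressed on its own."""
    return sum(len(zlib.compress(blob, level)) for blob in blobs)


def combined_size(blobs, level=9):
    """Size when all blobs are concatenated (the 'collage') and compressed once."""
    return len(zlib.compress(b"".join(blobs), level))


if __name__ == "__main__":
    blobs = make_similar_blobs()
    print("individually:", individual_size(blobs))
    print("as one blob: ", combined_size(blobs))
```

One design caveat: deflate only looks back 32 KiB, so with real images larger than that it cannot see the previous image at all. That is part of why a video codec or a long-window compressor is the more interesting thing to test.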

Desktop Applications

Unless someone has registered the trademark for those specific purposes, you’re clear. A trademark is only valid within a specific field of purpose. Trademarks are there to avoid consumers mistaking one brand for another.

There are a lot of entertaining articles on Techdirt about companies not understanding trademark law.

Does anyone know of a list of TLDs that don’t allow reselling? I’d prefer to buy/lease one of those and let domain sharks play their own games.

I use gitit and it’s already packaged in most Linux distros.

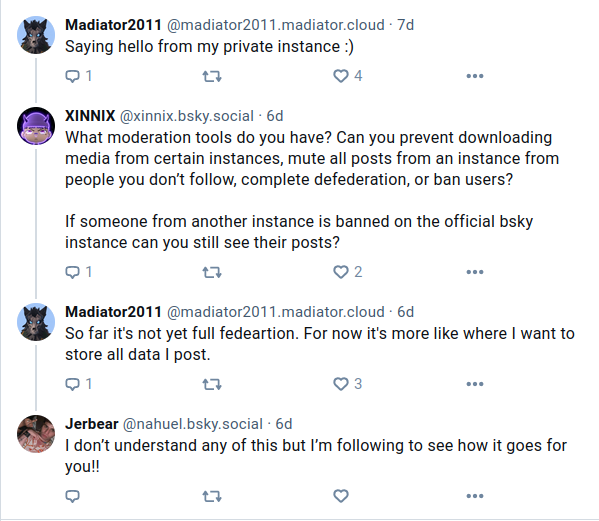

TLDR; Sorta federation. It is possible to selfhost data.

Yeah, that container probably crashed because of atmospheric disturbance.

I use Devuan and it’s just Debian without systemd.

Gothub is looking for a new maintainer.

Fortunately it’s just DNS.

Look up the domains at one of: ns1.cloudns.net ns2.cloudns.net ns3.cloudns.net ns4.cloudns.net
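If you want to script that lookup, here is a rough sketch that asks one specific nameserver directly over UDP using only the Python standard library. The function names are made up for illustration, it only asks for A records, and it does not parse the answer beyond the record count in the header.

```python
import socket
import struct


def build_query(name, qtype=1, query_id=0x1234):
    """Build a minimal DNS query packet (qtype 1 = A record, class IN)."""
    # Header: id, flags (0 = standard query, no recursion desired),
    # 1 question, 0 answer/authority/additional records.
    header = struct.pack("!HHHHHH", query_id, 0x0000, 1, 0, 0, 0)
    # Question name: each label prefixed by its length, terminated by a zero byte.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in name.rstrip(".").split(".")
    ) + b"\x00"
    return header + qname + struct.pack("!HH", qtype, 1)


def answer_count(reply):
    """Read ANCOUNT (bytes 6-7 of the DNS header) from a raw reply."""
    return struct.unpack("!H", reply[6:8])[0]


def ask(nameserver, name, timeout=3.0):
    """Send the query to one nameserver on UDP port 53 and return the raw reply."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(build_query(name), (nameserver, 53))
        reply, _ = sock.recvfrom(512)
    return reply
```

Usage would be something like `answer_count(ask("ns1.cloudns.net", "example.com"))`, where `example.com` is a placeholder for the domain in question. A non-zero count suggests that server has an A record for the name.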

Aliasing and forwarding are not a good solution if you are concerned about law enforcement, because your personal e-mail is still linked with the tracker, just behind an extra hop, and in addition you allow someone in between to read your e-mails. You had the answer yourself. Create a completely fresh free e-mail account somewhere, using at minimum a private tab to prevent tracking data from linking anything to the account… and if you can get a free e-mail account with IMAP/POP access so that you can use it in an e-mail client to leak less data, do that.

If you still want to respect user privacy, your analytics software could use the port of the connection instead of the IP as the identifier. It would be perfectly fine for determining simultaneous users from the same IP, but not invasive enough to monitor an individual’s behaviour. Don’t ask me which analytics software supports that. If it were me, I’d grab the data from the HTTP logs and use a tool like goaccess.
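A rough sketch of the log-based variant, assuming a custom log format that is not any default: each line starts with the client port followed by an ISO 8601 timestamp (with nginx that could be a `log_format` built from `$remote_port` and `$time_iso8601`). The IP is never logged, and the function name is made up. It counts distinct ports per minute as an approximation of simultaneous connections.

```python
from collections import defaultdict


def connections_per_minute(lines):
    """Count distinct client ports seen in each minute of the log.

    Assumed (hypothetical) line format: "<port> <iso8601-timestamp> <rest...>"
    """
    ports_by_minute = defaultdict(set)
    for line in lines:
        fields = line.split()
        if len(fields) < 2 or not fields[0].isdigit():
            continue  # skip lines that do not match the assumed format
        port, timestamp = fields[0], fields[1]
        minute = timestamp[:16]  # "YYYY-MM-DDTHH:MM"
        ports_by_minute[minute].add(port)
    return {minute: len(ports) for minute, ports in ports_by_minute.items()}
```

Keep in mind that a port identifies a connection, not a person: keep-alive puts many requests on one port, a browser can open several connections at once, and two clients can happen to use the same port number.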

Marginalia Search perhaps.

Also these are worth mentioning:

I build a lot of tools like that, and the first thing I do is open the developer tools in my browser and observe the network traffic. When you find the resource you’re after, you scroll back and see which requests resulted in that URL. Going from those requests, you figure out which parameters in the original static HTML document and its resources are used to construct the URL. That might require reversing some JavaScript, but that’s rare. After that you’ll have a pretty good idea of how to obtain the video resource from the original URL. Beware of cookies set by the requests; they might be needed to access the next requests. For building my tools I use Perl or sometimes just Bash or a GreaseMonkey userscript to fetch and parse the URLs and construct the desired output.
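The workflow above could be sketched roughly like this. It is in Python rather than the Perl or Bash I actually use, and everything site-specific is hypothetical: the `data-video-id` and `data-token` attributes, the `id`/`token` query parameters and the API base URL are placeholders for whatever you find in the network tab.

```python
import re
import urllib.request
from http.cookiejar import CookieJar
from urllib.parse import urlencode


def make_opener():
    """Opener that remembers cookies, so later requests can reuse them."""
    return urllib.request.build_opener(
        urllib.request.HTTPCookieProcessor(CookieJar())
    )


def extract_params(html):
    """Pull the (hypothetical) video id and token out of the static HTML."""
    video_id = re.search(r'data-video-id="([^"]+)"', html)
    token = re.search(r'data-token="([^"]+)"', html)
    if not (video_id and token):
        raise ValueError("expected parameters not found in page")
    return {"id": video_id.group(1), "token": token.group(1)}


def build_resource_url(api_base, params):
    """Construct the resource URL the same way the page's own requests do."""
    return api_base + "?" + urlencode(params)


def fetch_resource_url(opener, page_url, api_base):
    """Fetch the original page (cookies land in the opener) and derive the resource URL."""
    with opener.open(page_url) as response:
        html = response.read().decode("utf-8", errors="replace")
    return build_resource_url(api_base, extract_params(html))
```

Pass the same opener to whatever request comes next, so the cookies set by the first page are sent along.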

It seems that we are focusing on two different parts of the problem.

Finding the best way to classify which images compress best in bulk is an interesting problem in itself. In this particular case, the person asking had already picked out similar images by hand, and they can be identified by their timestamps to optimize a similarity comparison. What I wanted to find out was how well the similar images can be compressed with various methods and codecs with minimal loss of quality. My goal was not to use it as a method to classify the images. It was simply to examine how well the compression stage would work with various methods.